Custom AI Agent Datasets: How to Collect and Use Them Effectively

AI agents are becoming a core part of how businesses interact with users. They support customer service, qualify leads, schedule appointments, and handle follow-ups across channels. When designed well, AI agents deliver faster responses, consistent interactions, and personalized experiences that improve customer satisfaction and help teams scale their operations.

Behind every effective AI agent sits high-quality data. Datasets shape how an agent understands user intent, retrieves information, and responds in real situations. The gap between an agent that feels reliable and one that feels frustrating often comes down to the quality, relevance, and structure of the data it learns from.

Weak datasets lead to unclear answers, incorrect responses, and broken user flows. Strong datasets lead to accurate replies, stable behavior, and agents that users can trust. The earlier article on training AI agents introduced the core ideas behind that process, including defining goals, setting instructions, and shaping agent behavior.

This article builds on that foundation by shifting focus to the data itself. It covers how to collect the right datasets, how to structure them for real use cases, and how to apply them inside custom AI agents so training efforts lead to dependable performance in production.

What Constitutes an Effective Dataset for AI Agents

An effective dataset gives an AI agent the right context to perform its role with confidence and consistency. The goal is to provide training material that reflects real user needs, real questions, and real scenarios the agent will face in daily use.

When datasets are thoughtfully built, agents respond with clarity and stay aligned with business goals. Here are the key characteristics of an effective dataset:

Relevance

The data must connect directly to the tasks the agent is expected to handle. For a customer support agent, this includes product details, common issues, refund policies, shipping timelines, and troubleshooting steps.

For a sales or lead qualification agent, the dataset should reflect service offerings, pricing ranges, qualification criteria, and common objections. Irrelevant data adds noise and increases the risk of off-topic or misleading responses.

Diversity

Users do not all ask questions in the same way. An effective dataset includes many variations in phrasing, tone, and context. This helps the agent handle short questions, long explanations, casual language, formal requests, and incomplete inputs. Including different regional expressions and common typos also prepares the agent for real conversations that rarely follow perfect grammar or structure.

Accuracy

Training data must be clean, current, and aligned with official business information. Outdated policies, incorrect facts, or conflicting answers weaken trust and create user frustration. Every entry in the dataset should be reviewed for correctness and consistency. Clear source alignment across product documentation, internal guidelines, and support workflows helps ensure the agent provides reliable responses during live interactions.
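
One way to start the consistency review is to flag questions that appear with more than one answer. The following is a minimal sketch, assuming each entry is a dictionary with `question` and `answer` keys; the function names and sample values are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so near-identical strings compare equal."""
    return " ".join(text.lower().split())


def find_conflicts(entries: list[dict]) -> dict[str, set[str]]:
    """Return normalized questions that map to more than one distinct answer."""
    answers_by_question: dict[str, set[str]] = defaultdict(set)
    for entry in entries:
        answers_by_question[normalize(entry["question"])].add(normalize(entry["answer"]))
    return {q: a for q, a in answers_by_question.items() if len(a) > 1}


# Hypothetical sample entries; the policy values are made up for illustration.
entries = [
    {"question": "What is the refund window?", "answer": "30 days"},
    {"question": "what is the  refund window?", "answer": "14 days"},
    {"question": "Do you ship abroad?", "answer": "Yes"},
]

conflicts = find_conflicts(entries)
for question, answers in conflicts.items():
    print(f"Needs review: {question!r} has answers {sorted(answers)}")
```

A check like this only surfaces candidates. Deciding which answer matches the official source remains a manual review step.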

Methods to Collect Datasets

Collecting strong datasets starts with using the data sources your business already owns. Stammer AI provides several practical ways to gather and organize training material so AI agents can learn from real interactions and reliable internal resources.

Text-Based Data Collection

Customer interaction logs, chat transcripts, and CRM records offer a direct view into how users ask questions and what they expect in return. These sources reveal common requests, recurring issues, and typical response patterns used by support or sales teams. Cleaning and organizing this data helps AI agents learn how real conversations flow and how to respond with relevant information.

File Uploads

Many teams already store valuable knowledge in PDFs, spreadsheets, manuals, and internal guides. These documents can be uploaded through Stammer AI's file upload features and used as reference material for AI agents. Organizing files by topic or product line improves retrieval quality and helps agents return precise answers during live interactions.

Q and A Dataset Collection

Customer service transcripts and FAQ pages provide a natural source for building question and answer pairs. Structuring these into clear input and output examples helps AI agents map user intent to accurate responses. Grouping similar questions together also improves consistency and reduces confusion when users phrase requests in different ways.

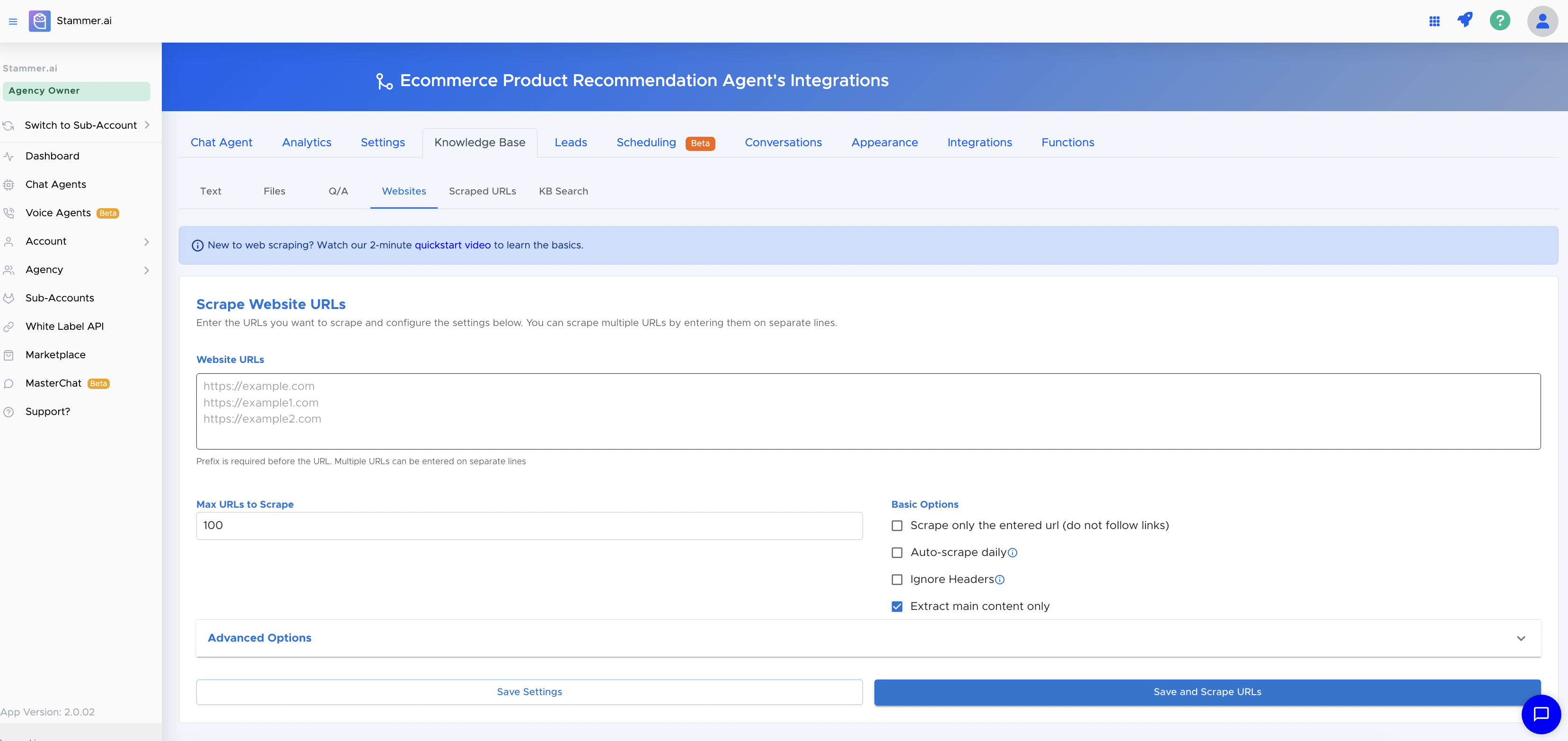
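
As a rough illustration of structuring question and answer pairs, the sketch below expands grouped FAQ items into one input/output pair per phrasing and serializes them as JSON Lines. The field names (`group`, `input`, `output`) and the sample FAQ text are assumptions made for this example, not a format required by any particular tool.

```python
import json

# Hypothetical FAQ content: each answer is listed with the phrasings that should map to it.
faq = [
    {
        "answer": "Orders ship within two business days.",
        "questions": ["When will my order ship?", "how long does shipping take"],
    },
    {
        "answer": "You can reset your password from the login page.",
        "questions": ["I forgot my password"],
    },
]


def to_pairs(faq_items: list[dict]) -> list[dict]:
    """Expand grouped FAQ items into one input/output pair per phrasing."""
    pairs = []
    for group_id, item in enumerate(faq_items):
        for question in item["questions"]:
            pairs.append({"group": group_id, "input": question, "output": item["answer"]})
    return pairs


pairs = to_pairs(faq)
# One JSON object per line, a common layout for question and answer datasets.
jsonl = "\n".join(json.dumps(pair) for pair in pairs)
print(jsonl)
```

Keeping a shared group number on similar questions makes it easy to confirm that every phrasing in a group still points to the same answer after an update.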

Website Content Scraping

Public websites often hold up-to-date product details, service descriptions, pricing information, and policy updates. Scraped URLs allow teams to collect this content and use it as part of the training dataset. Regular refresh cycles keep agents aligned with current information so responses stay accurate over time.
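
A refresh cycle does not have to reprocess every page each time. The sketch below shows one possible approach, assuming page text has already been fetched by some other means: store a hash of each page and reprocess only pages that are new or whose hash changed. The URLs, page text, and function names are hypothetical.

```python
import hashlib


def fingerprint(page_text: str) -> str:
    """Hash whitespace-normalized page text so spacing-only changes are ignored."""
    normalized = " ".join(page_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def pages_to_refresh(stored: dict[str, str], fetched: dict[str, str]) -> list[str]:
    """Return URLs whose content is new or differs from the stored fingerprint."""
    return sorted(
        url for url, text in fetched.items()
        if stored.get(url) != fingerprint(text)
    )


# Hypothetical URLs and page text; fetching the pages is out of scope here.
stored = {
    "https://example.com/pricing": fingerprint("Plans start at $10 per month."),
    "https://example.com/returns": fingerprint("Returns accepted within 30 days."),
}
fetched = {
    "https://example.com/pricing": "Plans start at $12 per month.",
    "https://example.com/returns": "Returns   accepted within 30 days.",
    "https://example.com/shipping": "We ship worldwide.",
}

changed = pages_to_refresh(stored, fetched)
print(changed)
```

In this sample, the pricing page has changed and the shipping page is new, while the returns page differs only in spacing and is skipped.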

Knowledge Base Search (KB Search)

Internal help articles, support tickets, onboarding guides, and product documentation can be organized into a searchable knowledge base. This setup allows AI agents to query internal resources during conversations and return precise answers drawn from approved content. A well-structured knowledge base improves response reliability and supports real-time information retrieval across use cases.
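
To make the idea of querying a knowledge base concrete, here is a deliberately simple sketch that ranks articles by word overlap with the user's question. It is a toy stand-in, not a description of how KB Search or any production retrieval system works, and the article titles and text are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Split text into a set of lowercase word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search(query: str, articles: dict[str, str], top_k: int = 1) -> list[str]:
    """Rank article titles by how many query words appear in the title plus body."""
    query_tokens = tokenize(query)
    scored = []
    for title, body in articles.items():
        overlap = len(query_tokens & tokenize(title + " " + body))
        if overlap:
            scored.append((overlap, title))
    # Highest overlap first; ties are broken alphabetically by title.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [title for _, title in scored[:top_k]]


# Hypothetical knowledge base articles.
articles = {
    "Resetting your password": "Use the forgot password link on the login page.",
    "Refund policy": "Refunds are issued to the original payment method.",
    "Shipping timelines": "Standard shipping takes several business days.",
}

results = search("How do I get a refund?", articles)
print(results)
```

Even this crude matcher shows why clear titles and focused articles matter: the closer an article's wording is to how users ask, the easier it is to retrieve.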

Preprocessing and Organizing Datasets

Raw data rarely works well in its original form. Preprocessing and organizing datasets helps AI agents learn from clean, structured, and meaningful information. This step improves response quality and reduces confusion during live interactions.

Data Standardization

Text data should follow a consistent format before it enters training workflows. This includes cleaning extra symbols, removing duplicated entries, fixing spelling errors, and aligning naming conventions across files and records. Consistent formatting helps the agent recognize patterns across similar inputs and improves retrieval accuracy during conversations.
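
A minimal sketch of the cleaning and deduplication steps, using only the Python standard library, might look like the following. The set of punctuation to keep is an assumption and should be adjusted to the data. Spelling correction and naming alignment are not covered here.

```python
import re
import unicodedata


def standardize(text: str) -> str:
    """Normalize Unicode, replace stray symbols with spaces, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    # Keep word characters, whitespace, and a small set of basic punctuation.
    text = re.sub(r"[^\w\s.,?!'$%-]", " ", text)
    return " ".join(text.split())


def deduplicate(records: list[str]) -> list[str]:
    """Keep the first occurrence of each record, comparing case-insensitively."""
    seen = set()
    unique = []
    for record in records:
        cleaned = standardize(record)
        key = cleaned.casefold()
        if cleaned and key not in seen:
            seen.add(key)
            unique.append(cleaned)
    return unique


raw = [
    "  How do I   reset my password?? ",
    "how do i reset my password??",
    "*** Refund policy ***",
    "",
]
unique_records = deduplicate(raw)
print(unique_records)
```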

Categorization

Grouping data into clear categories helps structure training material around real use cases. Common categories include FAQ content, customer service logs, and product documentation. This structure improves coverage across support topics and makes it easier to expand or update specific areas without disrupting the full dataset.

Annotation for Clarity

Labeling and tagging data adds helpful context for training. In Q and A datasets, tags can indicate user intent such as billing questions, technical issues, onboarding requests, or account updates. Clear labels help agents distinguish between similar questions that require different responses and improve intent matching during live use.
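
Manual tagging can be sped up with a first pass that suggests labels for a reviewer to confirm. The sketch below uses simple keyword matching. The intent names mirror the examples above, while the keyword lists and the function name are hypothetical and would need to come from real data.

```python
# Hypothetical intent labels and trigger keywords; real ones come from your own data.
INTENT_KEYWORDS = {
    "billing": {"invoice", "charge", "refund", "payment"},
    "technical_issue": {"error", "crash", "bug", "broken"},
    "onboarding": {"setup", "install", "started"},
    "account_update": {"email", "password", "profile"},
}


def suggest_intents(question: str) -> list[str]:
    """Return every intent whose keywords appear in the question, for human review."""
    words = {word.strip(".,?!").lower() for word in question.split()}
    return sorted(intent for intent, keywords in INTENT_KEYWORDS.items() if words & keywords)


questions = [
    "Why was there a second charge on my invoice?",
    "The app shows an error when I change my password",
    "What are your opening hours?",
]
tagged = [{"question": q, "intents": suggest_intents(q)} for q in questions]
for row in tagged:
    print(row)
```

Questions that receive several suggestions or none at all are the ones most worth a reviewer's attention, since they are the likeliest to be confused with similar requests.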

Handling Missing Data

Incomplete records reduce training quality and can lead to gaps in responses. Review datasets for missing fields, partial answers, or unclear entries. In some cases, missing values can be filled in using verified internal sources. In other cases, removing low-quality entries protects overall dataset integrity and keeps training results stable and reliable.
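
The fill-or-remove decision can be expressed as a small function. This is a sketch under stated assumptions: records are dictionaries with `topic`, `question`, and `answer` keys, and `VERIFIED_ANSWERS` is a hypothetical lookup built from approved internal sources.

```python
from typing import Optional

# Hypothetical verified answers, e.g. taken from approved documentation.
VERIFIED_ANSWERS = {"refund window": "Refunds are accepted within the published policy window."}


def repair(record: dict) -> Optional[dict]:
    """Fill a missing answer from the verified source, or return None to drop the record."""
    question = (record.get("question") or "").strip()
    answer = (record.get("answer") or "").strip()
    if not question:
        return None  # nothing to train on without a question
    if not answer:
        answer = VERIFIED_ANSWERS.get(record.get("topic", ""), "")
    if not answer:
        return None  # no verified fill available, so drop the record
    return {**record, "question": question, "answer": answer}


records = [
    {"topic": "refund window", "question": "How long do I have to return an item?", "answer": ""},
    {"topic": "shipping", "question": "Do you ship abroad?", "answer": None},
    {"topic": "hours", "question": "", "answer": "Open weekdays."},
    {"topic": "login", "question": "How do I log in?", "answer": "Use the login page."},
]
cleaned = [r for r in map(repair, records) if r is not None]
print(len(cleaned), "of", len(records), "records kept")
```

Filling only from a verified source, and dropping everything else, avoids introducing guessed answers into the dataset.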

Structuring Datasets for Optimal AI Training

A well-structured dataset allows AI agents to learn efficiently and deliver accurate, relevant responses. Structuring goes beyond collecting data; it defines how the information is organized and accessed during training.

Hierarchy and Depth

Organizing data in a hierarchical structure helps AI agents navigate between general and specialized knowledge. General knowledge includes common queries and responses such as basic customer questions or introductory product information.

Specialized knowledge covers industry-specific or product-specific details and is often managed within a Knowledge Base (KB Search). This separation ensures agents can respond accurately at different levels of complexity and maintain consistency across topics.
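
One simple way to picture this separation is a two-level lookup that prefers specialized knowledge and falls back to general knowledge. The structure, product name, and answer text below are hypothetical and serve only to illustrate the fallback idea.

```python
from typing import Optional

# Hypothetical two-level knowledge structure: general answers plus per-product detail.
KNOWLEDGE = {
    "general": {
        "warranty": "All products include a standard warranty.",
        "support_hours": "Support is available on weekdays.",
    },
    "products": {
        "router_x": {"warranty": "Router X includes an extended warranty."},
    },
}


def lookup(topic: str, product: Optional[str] = None) -> Optional[str]:
    """Prefer product-specific knowledge, then fall back to the general level."""
    if product is not None:
        specific = KNOWLEDGE["products"].get(product, {})
        if topic in specific:
            return specific[topic]
    return KNOWLEDGE["general"].get(topic)


print(lookup("warranty", "router_x"))       # product-specific entry
print(lookup("support_hours", "router_x"))  # falls back to the general entry
```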

Data Labeling and Tagging

Tagging datasets by intent, sentiment, and priority allows agents to handle a wide range of interactions with context awareness. Intent labels define the purpose of a user query, sentiment tags provide insight into emotional tone, and priority tags guide the agent in addressing urgent issues first. Clear labeling improves model understanding, reduces misinterpretation, and supports nuanced responses that align with business goals and user expectations.
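
A labeled example can be modeled as a small record with the three tags as fields. The sketch below is one possible layout, with hypothetical class names, label values, and sample text. It also shows how a priority tag can be used to put urgent items first.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    LOW = 1
    NORMAL = 2
    URGENT = 3


@dataclass(frozen=True)
class LabeledExample:
    text: str
    intent: str
    sentiment: str  # e.g. "positive", "neutral", "negative"
    priority: Priority


examples = [
    LabeledExample("Love the new dashboard!", "feedback", "positive", Priority.LOW),
    LabeledExample("I was charged twice and need this fixed today", "billing", "negative", Priority.URGENT),
    LabeledExample("How do I add a teammate?", "account_update", "neutral", Priority.NORMAL),
]

# Urgent items first; sorted() is stable, so equal priorities keep their original order.
queue = sorted(examples, key=lambda ex: ex.priority, reverse=True)
for ex in queue:
    print(ex.priority.name, ex.intent, ex.sentiment)
```

Using a fixed set of priority values rather than free text keeps labels consistent across annotators.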

Leveraging Real-Time Data for Continuous Learning

AI agents perform best when their knowledge reflects current information. Integrating real-time data and creating feedback mechanisms ensures agents stay accurate and useful over time.

Live Data Integration

Feeding live data into AI agents keeps responses relevant and up to date. Scraped URLs and website content bring in new product details, updates, and policies as they are published. Once a refresh schedule is in place, incorporating this information into training material lets agents answer with the latest information without a manual update for each change.

Updating Knowledge Bases

Regularly reviewing and refreshing the KB Search ensures internal knowledge remains current. Adding new help articles, FAQs, troubleshooting guides, and product updates allows agents to access accurate answers across all supported topics. Maintaining an organized knowledge base reduces outdated responses and improves confidence in AI recommendations.

Feedback Loops

Real user interactions generate valuable new data. By analyzing conversations, common questions, and missed queries, teams can identify gaps or areas for improvement. This data can then be added to training datasets, refining the agent’s performance over time. Continuous retraining based on user feedback ensures AI agents adapt to evolving needs and maintain high reliability.
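
As an illustration of finding gaps, the sketch below counts unresolved queries in a conversation log and reports those that recur. The log format, the `resolved` flag, and the threshold are assumptions made for this example. Real logs will need their own definition of a missed query.

```python
from collections import Counter

# Hypothetical conversation log; "resolved" marks whether the agent answered the query.
conversation_log = [
    {"query": "Can I pay by bank transfer?", "resolved": False},
    {"query": "can i pay by bank transfer", "resolved": False},
    {"query": "Where is my order?", "resolved": True},
    {"query": "Do you offer student discounts?", "resolved": False},
]


def missed_queries(log: list[dict], min_count: int = 2) -> list[tuple[str, int]]:
    """Count unresolved queries, ignoring case and trailing punctuation."""
    counts = Counter(
        entry["query"].lower().rstrip("?.! ")
        for entry in log
        if not entry["resolved"]
    )
    return [(query, n) for query, n in counts.most_common() if n >= min_count]


gaps = missed_queries(conversation_log)
print(gaps)
```

Recurring missed queries are good candidates for new question and answer pairs or knowledge base articles in the next retraining cycle.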

Stammer AI Dashboard Tools

Stammer AI provides integrated features to manage and enhance datasets with ease. The Text module allows teams to organize chat logs and CRM records, while Files supports PDFs, spreadsheets, and manuals for training.

Q/A tools help structure question-answer pairs, and Websites and Scraped URLs bring in current content from public sources. The KB Search feature organizes internal knowledge and ensures agents can retrieve accurate information in real time. Together, these tools allow teams to maintain a complete, structured dataset ready for AI training.

Conclusion

Creating effective AI agents is an ongoing effort. Observing how agents perform, learning from user interactions, and making small improvements over time can help them become more useful and reliable. A careful and steady approach ensures AI agents continue to support business needs and provide value to users.